This post explains the history of HBase, the HBase architecture, basic details about HBase, and the different types of NoSQL databases.

History of HBase

It started at Google. Each Google system has an open-source counterpart in the Hadoop ecosystem:

GFS -> HDFS

MapReduce -> Hadoop MapReduce

Bigtable -> Apache HBase

Any SQL system (RDBMS)

1. When user data keeps increasing, we add a caching mechanism to improve performance.

2. The caching mechanism also has its limits.

3. Next, we remove indexes.

4. Then we avoid joins.

5. Finally, we use materialized views.

Once we do all of the above, the advantages of an RDBMS are gone.

Google faced the same problem, so they built Bigtable.

We use HBase for faster performance.

Features that Hive does not support, such as CRUD operations, can be done with HBase.

If data needs to be updated and accessed in real time, HBase is very useful.

For example, real-time ad targeting is much faster with HBase.

What are the common problems with existing data processing in Hadoop or Hive?

1. Huge data

2. Fast random access

3. Structured data

4. Variable schema: columns can be added at runtime, which an RDBMS does not readily support.

5. Need for compression

6. Need for distribution (sharding)

How would a traditional system (RDBMS) solve this?

Case: suppose we want to design a LinkedIn-style database to maintain connections.

There are 2 tables:

1. Users: id, name, sex, age

2. Connections: user_id, connection_id, type

In HBase, however, we can store all the details about users and their connections in a single table, within the same column family.
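As a rough illustration of that single-table idea, here is a minimal Python sketch that uses plain dictionaries in place of HBase. The column family name `info`, the `conn:` qualifier prefix, and the sample users are all assumptions made for this example; they are not part of any real schema.

```python
# Hypothetical layout: one row per user, one column family called "info".
# Profile fields and connections are simply different column qualifiers.
users = {
    "user1": {
        "info:name": "Asha",
        "info:sex": "F",
        "info:age": "29",
        "info:conn:user2": "colleague",
        "info:conn:user3": "friend",
    },
    "user2": {
        "info:name": "Ravi",
        "info:conn:user1": "colleague",
    },
}

CONN_PREFIX = "info:conn:"


def connections(table, user_id):
    """Return {connection_id: type} for one user, read from a single row."""
    row = table.get(user_id, {})
    return {
        column[len(CONN_PREFIX):]: kind
        for column, kind in row.items()
        if column.startswith(CONN_PREFIX)
    }


user1_connections = connections(users, "user1")
print(user1_connections)
```

Reading a user's profile and connections touches one row, so no join between a users table and a connections table is needed.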

Characteristics of a Probable Solution

1. Distributed Database

2. Sorted Data

3. Sparse Data Store

4. Automatic Sharding.

Sorted Data

Example: how is data stored in a sorted way?

1. www.abc.com

2. www.ghf.com

3. mail.abc.com

With normal storage, these keys sort as mail.abc.com, www.abc.com, www.ghf.com. When a user tries to access abc.com, mail.abc.com is not stored next to www.abc.com, so it will not be returned along with it.

If we reverse the domain names before storing them, the sorted data looks like this:

com.abc.mail

com.abc.www

com.ghf.www

Stored this way, all the keys for abc.com are adjacent, so they are easy to access together.
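The reversed-key idea can be sketched in a few lines of Python. This is only an illustration with a sorted list standing in for HBase's sorted row keys; the helper names `reverse_domain` and `prefix_scan` are made up for this example.

```python
from bisect import bisect_left


def reverse_domain(host):
    """Turn 'mail.abc.com' into 'com.abc.mail'."""
    return ".".join(reversed(host.lower().split(".")))


def prefix_scan(sorted_keys, prefix):
    """Return all keys starting with prefix, using the sort order to find the start."""
    start = bisect_left(sorted_keys, prefix)
    result = []
    for key in sorted_keys[start:]:
        if not key.startswith(prefix):
            break  # keys are sorted, so no later key can match
        result.append(key)
    return result


hosts = ["www.abc.com", "www.ghf.com", "mail.abc.com"]
row_keys = sorted(reverse_domain(h) for h in hosts)
abc_keys = prefix_scan(row_keys, "com.abc.")
print(row_keys)
print(abc_keys)
```

Because related keys sit next to each other, one short scan over a contiguous range returns every host under abc.com.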

Sparse Data Store

"Sparse" is a term from mathematics. If a particular column has a null value for a row, nothing is stored for it.
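A small sketch of sparse storage, again using Python dictionaries rather than HBase itself. The function name `put_row` and the sample columns are assumptions for illustration only.

```python
def put_row(table, row_key, cells):
    """Store only the cells that actually have a value; nulls take no space."""
    table[row_key] = {col: val for col, val in cells.items() if val is not None}


table = {}
put_row(table, "user1", {"info:name": "Asha", "info:age": None, "info:city": "Pune"})
put_row(table, "user2", {"info:name": "Ravi", "info:age": 31, "info:city": None})

# Count the cells that were really stored across all rows.
stored_cells = sum(len(cells) for cells in table.values())
print(stored_cells)
```

Two rows with three possible columns each would occupy six slots in a fixed-width table; here only the four non-null cells are kept.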

NoSQL Landscape

1. The NoSQL databases in this landscape are similar in kind, but each was developed for its own purpose.

2. Dynamo was developed by Amazon and is available in the cloud, where we can access it.

3. Cassandra was developed at Facebook, which used it itself. It combines ideas from Dynamo and from Bigtable (the model HBase follows), so features of both are available in Cassandra.

Any NoSQL database can be described by the three CAP properties: consistency, availability, and partition tolerance.

It can satisfy only two of these properties at the same time.

HBase Definition

HBase is a non-relational (NoSQL) database that stores data as key-value pairs. It is also called the Hadoop database.

1. Sparse

2. Distributed

3. Multi-dimensional (a value is addressed by table name, row key, column name, timestamp, etc.)

4. Sorted map

5. Consistent
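To make "multi-dimensional sorted map" more concrete, here is a toy model in Python. It is a sketch under simplifying assumptions (a single table held in memory, integer timestamps, a made-up class name `TinyTable`), not how HBase is implemented.

```python
class TinyTable:
    """Toy model: (row key, column) -> {timestamp: value}."""

    def __init__(self):
        self.cells = {}

    def put(self, row, column, value, ts):
        # Each write adds a new version instead of overwriting the old one.
        self.cells.setdefault((row, column), {})[ts] = value

    def get(self, row, column, ts=None):
        """Return the newest value, or the newest value at or before ts."""
        versions = self.cells.get((row, column), {})
        candidates = [t for t in versions if ts is None or t <= ts]
        return versions[max(candidates)] if candidates else None

    def scan(self):
        """Yield the latest cells in sorted (row key, column) order."""
        for row, column in sorted(self.cells):
            yield row, column, self.get(row, column)


t = TinyTable()
t.put("row2", "info:name", "Ravi", ts=1)
t.put("row1", "info:name", "Asha", ts=1)
t.put("row1", "info:name", "Asha K", ts=2)
latest = t.get("row1", "info:name")
older = t.get("row1", "info:name", ts=1)
scanned = list(t.scan())
print(latest, older, scanned)
```

The timestamp is what makes the map multi-dimensional: the same row and column can hold several versions, and a read picks one of them.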

Difference between HBase and RDBMS

When to use HBase

When not to use HBase?

1. When you have only a few thousand or a few million records, HBase is not advisable.

2. HBase lacks RDBMS commands. If your application requires SQL commands, do not choose HBase.

3. When you have fewer than 5 DataNodes (HDFS replicates each block 3 times by default), there is no need for HBase. It would only be overhead for the system.

HBase can run on a local system, but this should be considered a development configuration only.

How Facebook uses HBase as its messaging system

1. Facebook monitored its usage and figured out what it really needed.

What it needed was a system that could handle two types of data patterns:

1. A short set of temporal data that tends to be volatile

2. An ever-growing set of data that is rarely accessed

1. Real Time

2. Key Value

3. Linearly scalable

4. Big Data

5. Column oriented

6. Distributed

7. Robust

8. Scalable

9. Open source

These are the characteristics of HBase.

HBase is used not only by Facebook but also by Twitter, Yahoo, and others to process their large volumes of data.

Major components of HBase

1. The HBase Master

It manages table metadata, assigns regions to region servers, and coordinates the cluster. Its role is similar to that of the NameNode in HDFS.

2. The HRegion server

The actual data is stored on and served by these servers.

3. The HBase client

We interact with the client to perform CRUD operations and process the data.

How is data distributed in HBase?

How does HBase write data to a file?

1. Every HBase write requires confirmation from both the Write Ahead Log (WAL) and the MemStore.

2. These two steps ensure that every write to HBase happens as fast as possible while maintaining durability.

3. The MemStore is flushed to a new HFile when it fills up.

4. The MemStore flush size is configurable (128 MB by default in recent HBase releases). Once the MemStore fills up, its contents are moved to an HFile, which is written in blocks of 64 KB by default.

5. Once written, an HFile is immutable.
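The write steps above can be sketched as a small in-memory model. This is an illustration only: the class name `TinyRegion` is hypothetical, a Python list stands in for the WAL file, and the flush threshold counts rows instead of bytes.

```python
class TinyRegion:
    """Toy write path: WAL first, then MemStore, flush to an immutable 'HFile'."""

    def __init__(self, flush_threshold=3):
        self.wal = []        # append-only log; stands in for a file on HDFS
        self.memstore = {}   # in-memory buffer: row key -> value
        self.hfiles = []     # flushed snapshots, never modified afterwards
        self.flush_threshold = flush_threshold  # assumption: counted in rows

    def put(self, row_key, value):
        self.wal.append((row_key, value))   # step 1: log the edit for durability
        self.memstore[row_key] = value      # step 2: apply it in memory
        if len(self.memstore) >= self.flush_threshold:
            self.flush()
        return True                         # acknowledged only after both steps

    def flush(self):
        # A tuple of sorted pairs models an immutable, sorted HFile.
        self.hfiles.append(tuple(sorted(self.memstore.items())))
        self.memstore = {}


region = TinyRegion(flush_threshold=2)
region.put("row-b", "1")
region.put("row-a", "2")   # the second put reaches the threshold and triggers a flush
region.put("row-c", "3")
print(region.hfiles, region.memstore, len(region.wal))
```

The write is fast because both steps are cheap (an append and an in-memory update), and it is durable because the logged edits can be replayed if the in-memory buffer is lost.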

HBase Read Path

1. Data is reconciled from the block cache, the MemStore, and the HFiles to give the client an up-to-date view of the rows it requested.

2. HFiles contain a snapshot of the MemStore at the point when it was flushed. Data for a complete row can be stored across multiple HFiles.

3. To read a complete row, HBase must read across all HFiles that might contain information for that row in order to compose the complete record.
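A simplified sketch of that reconciliation follows. It assumes the HFiles are listed from oldest to newest and that the MemStore always holds the newest data; real HBase compares cell timestamps and also consults the block cache, which this example leaves out. The function name `read_row` is made up for the illustration.

```python
def read_row(row_key, memstore, hfiles):
    """Compose one row from several stores; newer stores override older ones.

    hfiles is ordered oldest to newest, and each store maps
    row key -> {column: value}.
    """
    merged = {}
    for store in list(hfiles) + [memstore]:
        merged.update(store.get(row_key, {}))
    return merged


hfiles = [
    {"row1": {"info:name": "Asha", "info:city": "Pune"}},   # oldest flush
    {"row1": {"info:city": "Delhi"}},                        # newer flush
]
memstore = {"row1": {"info:age": 30}}                        # not flushed yet

row = read_row("row1", memstore, hfiles)
print(row)
```

No single store holds the whole row here: the name comes from the oldest file, the city from the newer one, and the age from memory.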

HFile Compaction

During compaction, the HFiles of a store are merged and rewritten as a single compacted HFile.
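Compaction can be pictured as a merge of already-sorted files. The sketch below uses `heapq.merge` from the Python standard library; the record layout `(row_key, sequence, value)` and the rule that a higher sequence number means newer data are assumptions made for this example.

```python
import heapq


def compact(hfiles):
    """Merge sorted HFiles into one, keeping only the newest value per row key.

    Each HFile is a list of (row_key, sequence, value) tuples sorted by
    row key and then by sequence number.
    """
    latest = {}
    for row_key, seq, value in heapq.merge(*hfiles):
        # Records arrive sorted by (row_key, seq), so a later record for the
        # same key is always newer and replaces the earlier one.
        latest[row_key] = (row_key, seq, value)
    return list(latest.values())


older = [("a", 1, "x"), ("c", 1, "y")]
newer = [("a", 2, "z"), ("b", 2, "w")]
compacted = compact([older, newer])
print(compacted)
```

After compaction a read for any row needs to look in one file instead of several.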

HBase Components

1. Region: a range of rows stored together

2. Region server: serves one or more regions

a. A region is served by only one region server

3. Master server: the daemon responsible for managing the HBase cluster

4. HBase stores its data in HDFS and relies on HDFS's high availability and fault tolerance.

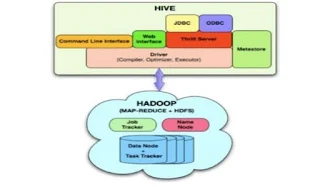

HBase Architecture

The architecture diagram above shows how HBase works.

These are the basics of HBase. In my next post, you can see how to install and work with HBase.

Thank you very much for viewing this post.

As an example of data distribution, suppose we have data rows with keys from A to Z:

Rows A1, A2: region Null to A3, served by region server 1

Rows B2, B3, B23, B43: region B2 to B43, served by region server 2

Rows K1, K2, Z30: region K1 to Z30, served by region server 3
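Finding the server for a row key is a lookup in a sorted list of region start keys. The sketch below assumes three contiguous regions starting at the empty key, "B", and "K"; these boundaries are chosen for illustration and are not taken from a real cluster.

```python
from bisect import bisect_right

# Assumed region boundaries: each region starts at its start key and runs
# up to (but not including) the next region's start key.
region_starts = ["", "B", "K"]
region_servers = ["region server 1", "region server 2", "region server 3"]


def locate(row_key):
    """Return the server whose region contains row_key."""
    index = bisect_right(region_starts, row_key) - 1
    return region_servers[index]


placement = {key: locate(key) for key in ["A1", "A2", "B2", "B43", "K1", "Z30"]}
print(placement)
```

Because each key falls into exactly one range, every row is served by exactly one region server.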
